AI for Engineering Knowledge Management

Discover the Full Mechanical Engineer’s AI Dictionary for 2025. Learn the essential AI terms, from neural networks to digital twins, and see how Leo AI helps mechanical engineers integrate artificial intelligence into CAD, PLM, and everyday design workflows.

Dr. Maor Farid

Maor Farid is the Co-Founder and CEO of Leo AI, the first AI platform purpose-built for mechanical engineers. He holds a PhD in Mechanical Engineering and completed postdoctoral research at MIT as a Fulbright fellow. A Forbes 30 Under 30 honoree and former AI researcher and mechanical engineer in an elite military intelligence unit, Maor leads Leo AI's mission to transform how engineering teams design better products faster.

BOTTOM LINE

Hyperparameters: Settings like learning rate and batch size that you configure before training an AI model.

Precision: The percentage of positive predictions that are correct.

Prompt: Instructions given to an AI system to generate a specific output.

Retrieval-Augmented Generation (RAG): A system that retrieves external information to augment an AI's knowledge before generating a response.

Token: A unit of text that an AI system processes (roughly equivalent to a word or a sub-unit of a word).
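To make the precision definition above concrete, here is a minimal sketch. The labels are made-up inspection results, and returning 0.0 when the model makes no positive predictions is a convention chosen for this example, not a universal rule.

```python
def precision(y_true, y_pred):
    """Fraction of predicted positives that are truly positive."""
    true_pos = sum(1 for t, p in zip(y_true, y_pred) if p == 1 and t == 1)
    pred_pos = sum(1 for p in y_pred if p == 1)
    if pred_pos == 0:
        return 0.0  # convention chosen here: no positive predictions were made
    return true_pos / pred_pos

# Hypothetical inspection results: 1 = "defective", 0 = "good"
y_true = [1, 0, 1, 1, 0, 0, 1, 0]
y_pred = [1, 1, 1, 0, 0, 0, 1, 0]

# 4 parts were flagged as defective, 3 of them really were
print(precision(y_true, y_pred))  # prints 0.75
```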

Foundations: The Building Blocks of AI

✔️Artificial Intelligence (AI)

AI is the science of building systems that perform tasks requiring human-like intelligence - solving problems, making decisions, and recognizing patterns.

In mechanical engineering, AI is used to automate repetitive workflows, analyze vast datasets, accelerate decisions, and optimize design processes.

Analogy: Think of designing a CNC machine - but instead of machining metal from drawings, you’re machining decisions from data.

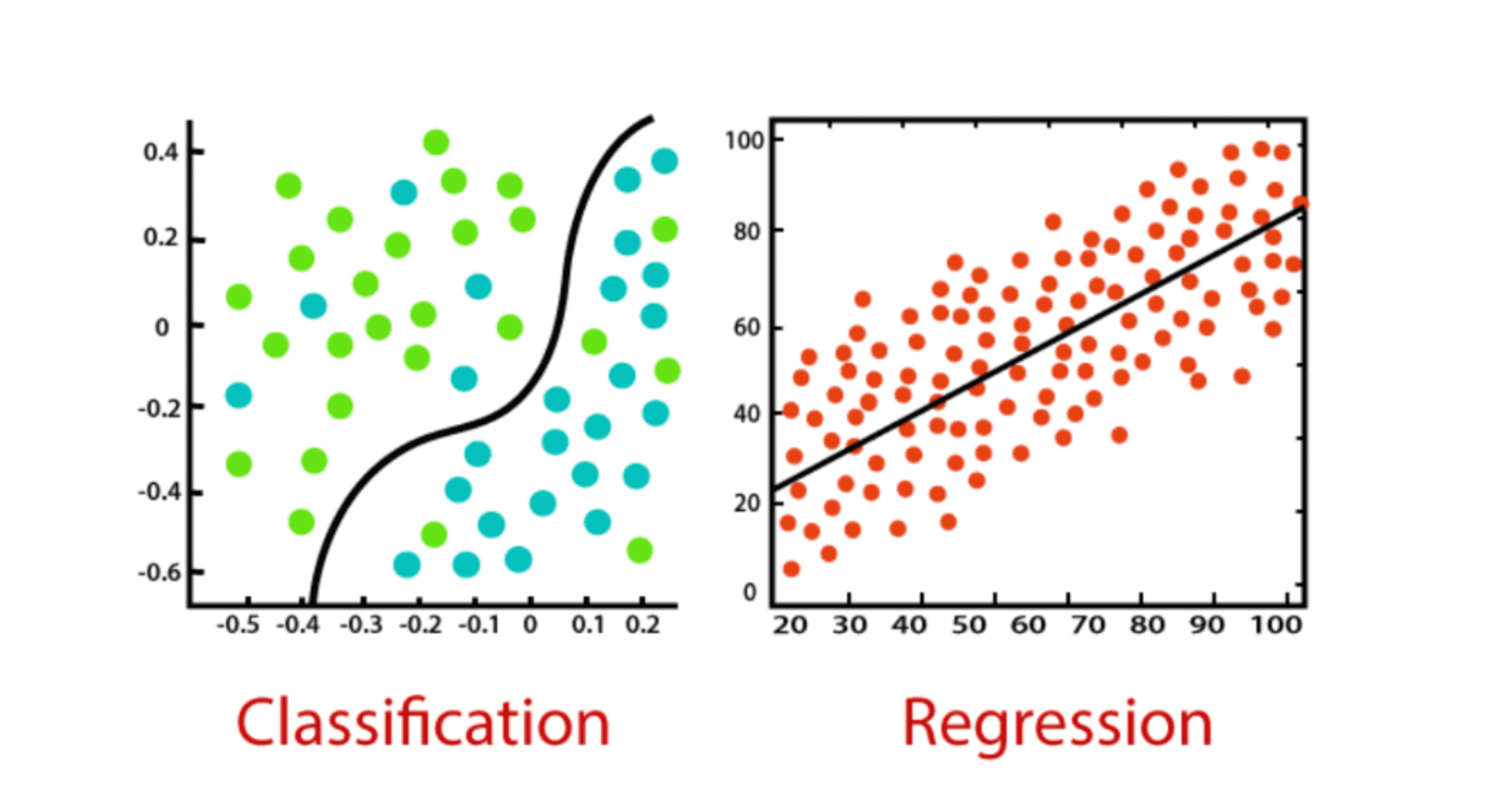

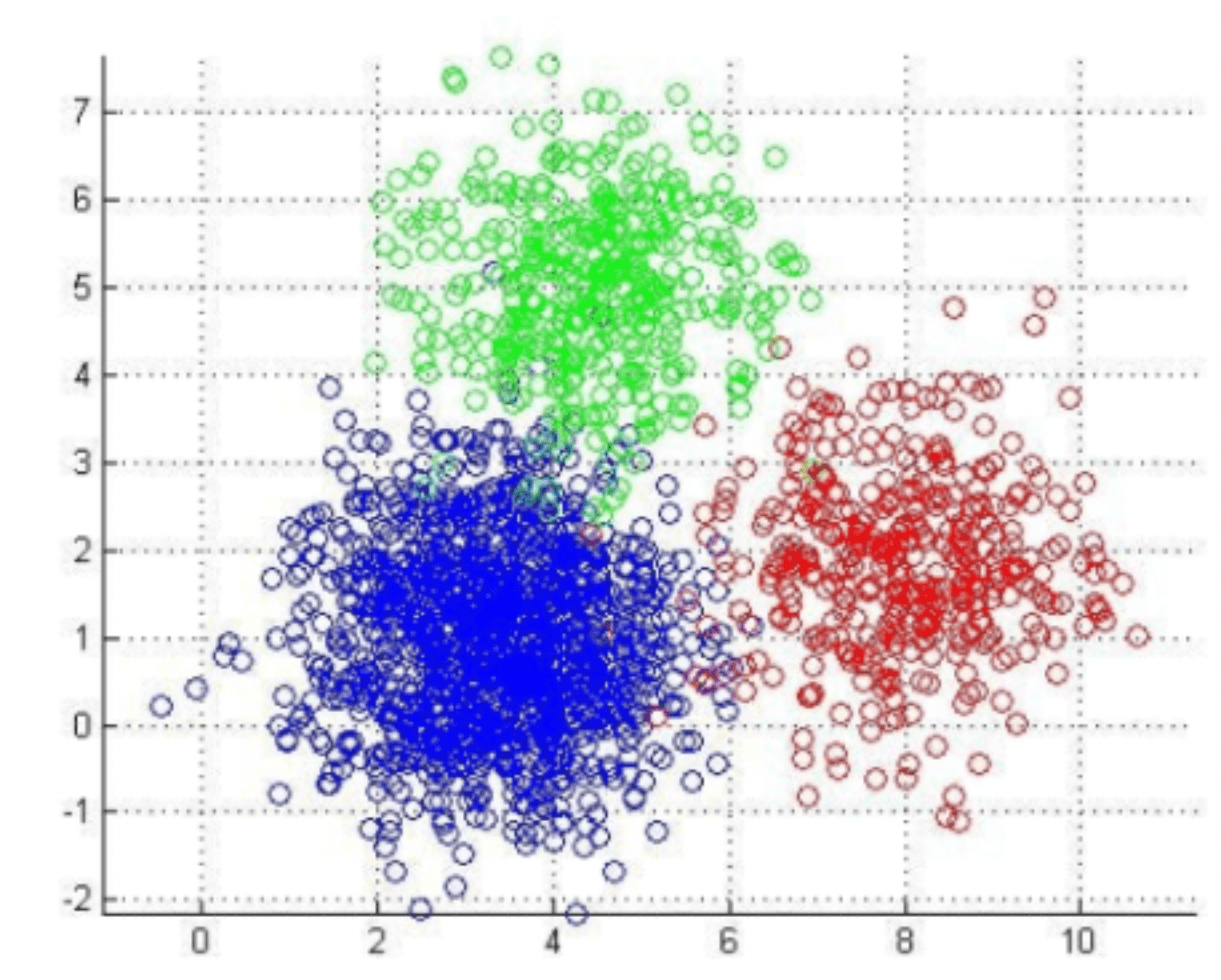

✔️ Machine Learning (ML)

Machine Learning is a branch of AI where systems learn patterns from examples rather than hard-coded rules.

Example: Detecting bearing wear from vibration signals or predicting fatigue before failure. As more data is collected, models improve, enabling engineers to reduce waste, meet performance goals, and improve quality.
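As a rough illustration of "learning from examples rather than hard-coded rules", the sketch below fits a one-feature logistic model to synthetic vibration readings. The data, the units, and every number in it are invented for the example; a real bearing-wear detector would use measured signals and richer features.

```python
import math
import random

random.seed(0)

# Synthetic, made-up data: RMS vibration (mm/s) for healthy (0) and worn (1) bearings.
data = [(random.gauss(2.0, 0.5), 0) for _ in range(200)]
data += [(random.gauss(4.0, 0.7), 1) for _ in range(200)]

# Standardize the feature so plain gradient descent behaves well.
mean = sum(x for x, _ in data) / len(data)
std = math.sqrt(sum((x - mean) ** 2 for x, _ in data) / len(data))
samples = [((x - mean) / std, y) for x, y in data]

def predict_proba(x, w, b):
    """Logistic model: probability that a reading comes from a worn bearing."""
    return 1.0 / (1.0 + math.exp(-(w * x + b)))

# No threshold is written by hand: w and b are learned from the labeled examples.
w, b, lr = 0.0, 0.0, 0.5
for _ in range(300):
    grad_w = sum((predict_proba(x, w, b) - y) * x for x, y in samples) / len(samples)
    grad_b = sum(predict_proba(x, w, b) - y for x, y in samples) / len(samples)
    w -= lr * grad_w
    b -= lr * grad_b

accuracy = sum((predict_proba(x, w, b) >= 0.5) == (y == 1) for x, y in samples) / len(samples)
print(f"training accuracy: {accuracy:.2f}")
```

Note that this reports accuracy on the same data used for fitting, which is optimistic; held-out data gives a fairer estimate.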

✔️ Deep Learning (DL)

Deep Learning is a subset of ML that uses multi-layer artificial neural networks to extract progressively higher-level features from raw data - like refining a design from sketch → components → tolerances.

It powers quality control systems, processes sensor data in real time, detects surface defects, and evaluates material properties.

✔️ Reinforcement Learning (RL)

Reinforcement Learning is about trial and error - models learn strategies by receiving rewards for good decisions and penalties for poor ones.

RL is applied in robotics, autonomous vehicles, and factory automation, where it is used to optimize navigation, energy efficiency, and safety.
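The reward-and-penalty idea can be sketched with tabular Q-learning on a toy problem. Everything here is an assumption made for illustration: a six-position corridor, a reward of +1 at the far end, a small penalty per move, and hand-picked learning settings. Real robotics problems are far larger and usually need function approximation.

```python
import random

random.seed(0)

N_STATES = 6          # positions 0..5 along a corridor; position 5 is the goal
ACTIONS = (-1, +1)    # step left or step right
ALPHA, GAMMA, EPSILON = 0.5, 0.9, 0.1

# Q[s][a] estimates the long-run reward of taking action index a in state s.
Q = [[0.0, 0.0] for _ in range(N_STATES)]

def step(state, action):
    """Toy environment: reward +1 at the goal, small penalty for every other move."""
    next_state = min(max(state + action, 0), N_STATES - 1)
    done = next_state == N_STATES - 1
    reward = 1.0 if done else -0.01
    return next_state, reward, done

for episode in range(200):
    state, done = 0, False
    while not done:
        # Epsilon-greedy: mostly exploit the best known action, sometimes explore.
        if random.random() < EPSILON or Q[state][0] == Q[state][1]:
            a = random.randrange(2)
        else:
            a = 0 if Q[state][0] > Q[state][1] else 1
        next_state, reward, done = step(state, ACTIONS[a])
        # Q-learning update: move the estimate toward reward + discounted best future value.
        target = reward + (0.0 if done else GAMMA * max(Q[next_state]))
        Q[state][a] += ALPHA * (target - Q[state][a])
        state = next_state

# Learned strategy for each non-goal position.
policy = ["left" if q[0] > q[1] else "right" for q in Q[:-1]]
print(policy)
```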

✔️ Model (Learning Model)

A model is a mathematical function mapping inputs to outputs. In mechanics, stress = force / area is a simple model. AI models approximate much more complex relationships - like mapping an image to the probability it contains a crack or linking design parameters to performance requirements.
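Written as code, the stress example is just a function from inputs to an output. The function name and the sample load and area below are illustrative choices, not values from a real part.

```python
def axial_stress(force_n: float, area_m2: float) -> float:
    """Simple physical model: normal stress (Pa) = force (N) / area (m^2)."""
    if area_m2 <= 0:
        raise ValueError("area must be positive")
    return force_n / area_m2

# Inputs -> output, the same shape as a learned model, but with a known closed form.
stress_pa = axial_stress(force_n=10_000.0, area_m2=0.0005)
print(f"{stress_pa / 1e6:.1f} MPa")  # prints "20.0 MPa"
```

A learned model has the same shape (inputs in, prediction out), but its internal formula is fitted from data instead of derived from first principles.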

Next, How Models Work: From Inputs to Outputs

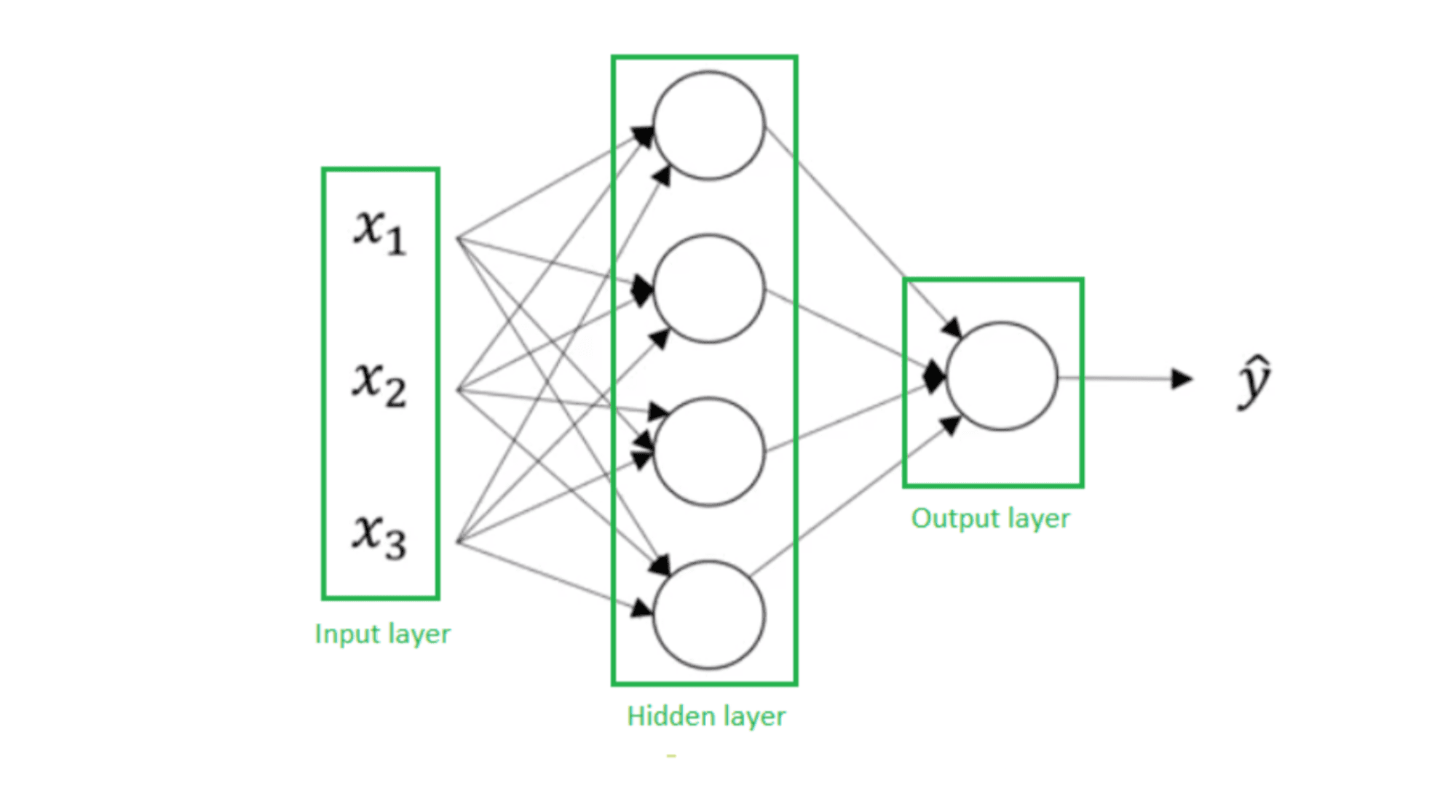

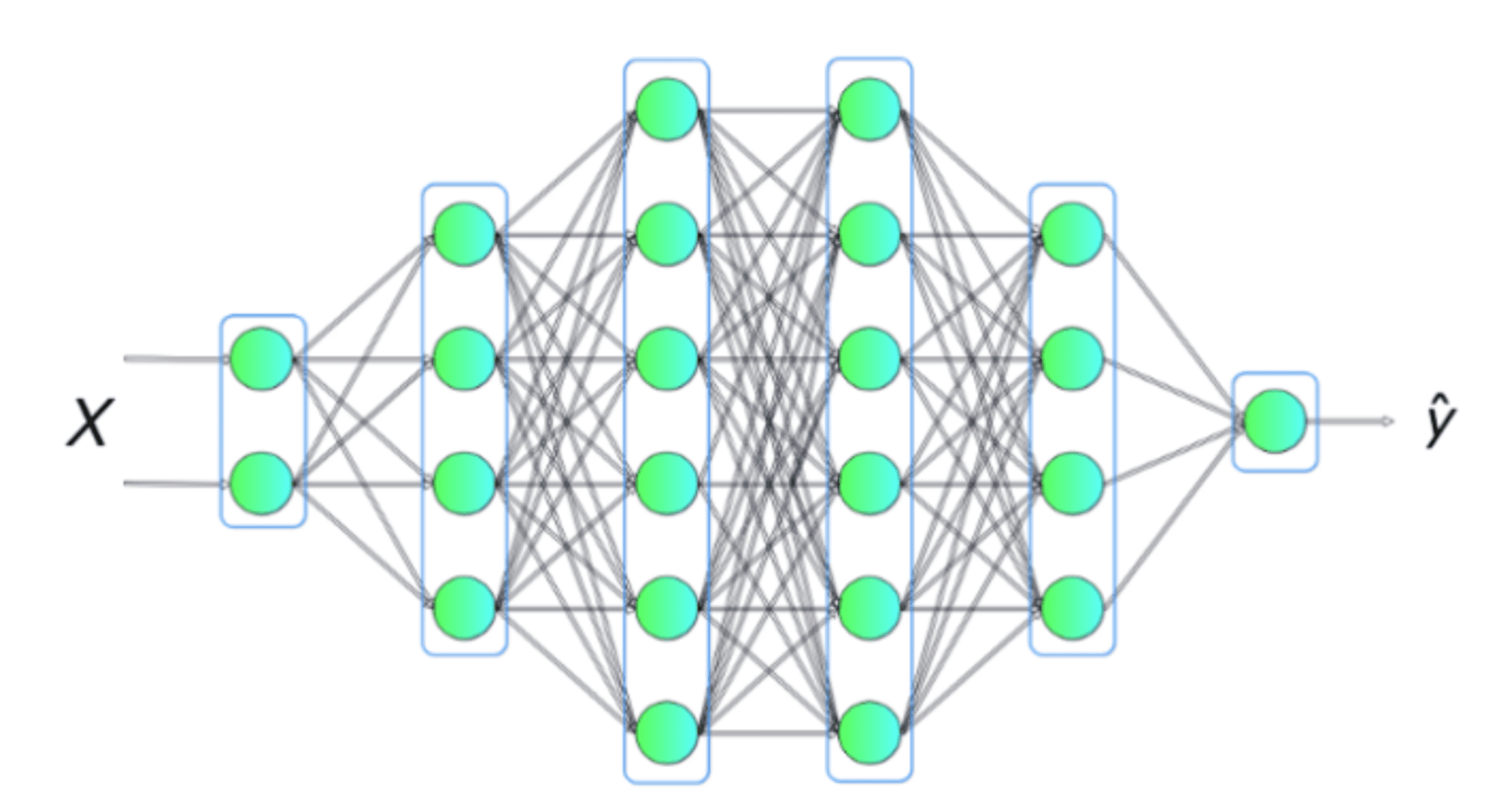

✔️ Neural Networks (NN)

A neural network is a system of interconnected nodes (“neurons”) that can represent complex relationships - much like simple linkages in a mechanism can create surprisingly rich motion.

Weights and Biases: Like spring constants and preloads, they define how each neuron responds.

Forward Pass: Inputs (e.g., an image of a gear) are transformed layer by layer into outputs (“Probability = 0.90 this is a spur gear”).

In this shallow neural network, x_i represents the inputs to the network. These elements are scalars and are stacked vertically, forming the input layer. Values in the hidden layer are computed inside the network and do not appear in the input data. The output layer consists of a single neuron, and ŷ is the output of the neural network.

A deeper neural network with four hidden layers. Think of it like a polynomial with more parameters - it can capture and learn more complex relationships between the input variables and the output value.
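A forward pass through a shallow network like the one described above can be sketched in a few lines. The weights and biases are hand-picked placeholders (a trained network would learn them), and the choice of tanh and sigmoid activations is an assumption made for this example.

```python
import math

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def dense(inputs, weights, biases, activation):
    """One layer: each neuron computes activation(w . x + b)."""
    return [
        activation(sum(w * x for w, x in zip(neuron_w, inputs)) + b)
        for neuron_w, b in zip(weights, biases)
    ]

# Hypothetical, hand-picked parameters (a trained network would learn these).
W_hidden = [[0.5, -0.2, 0.1],
            [-0.3, 0.8, 0.4]]        # 2 hidden neurons, 3 inputs each
b_hidden = [0.0, 0.1]
W_out = [[1.2, -0.7]]                # 1 output neuron, 2 hidden inputs
b_out = [0.05]

x = [1.0, 2.0, 3.0]                  # input layer: three scalar features x_i
hidden = dense(x, W_hidden, b_hidden, math.tanh)
y_hat = dense(hidden, W_out, b_out, sigmoid)[0]   # single output neuron
print(f"y_hat = {y_hat:.3f}")
```

Adding more hidden layers means calling `dense` more times in sequence, each layer feeding the next.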

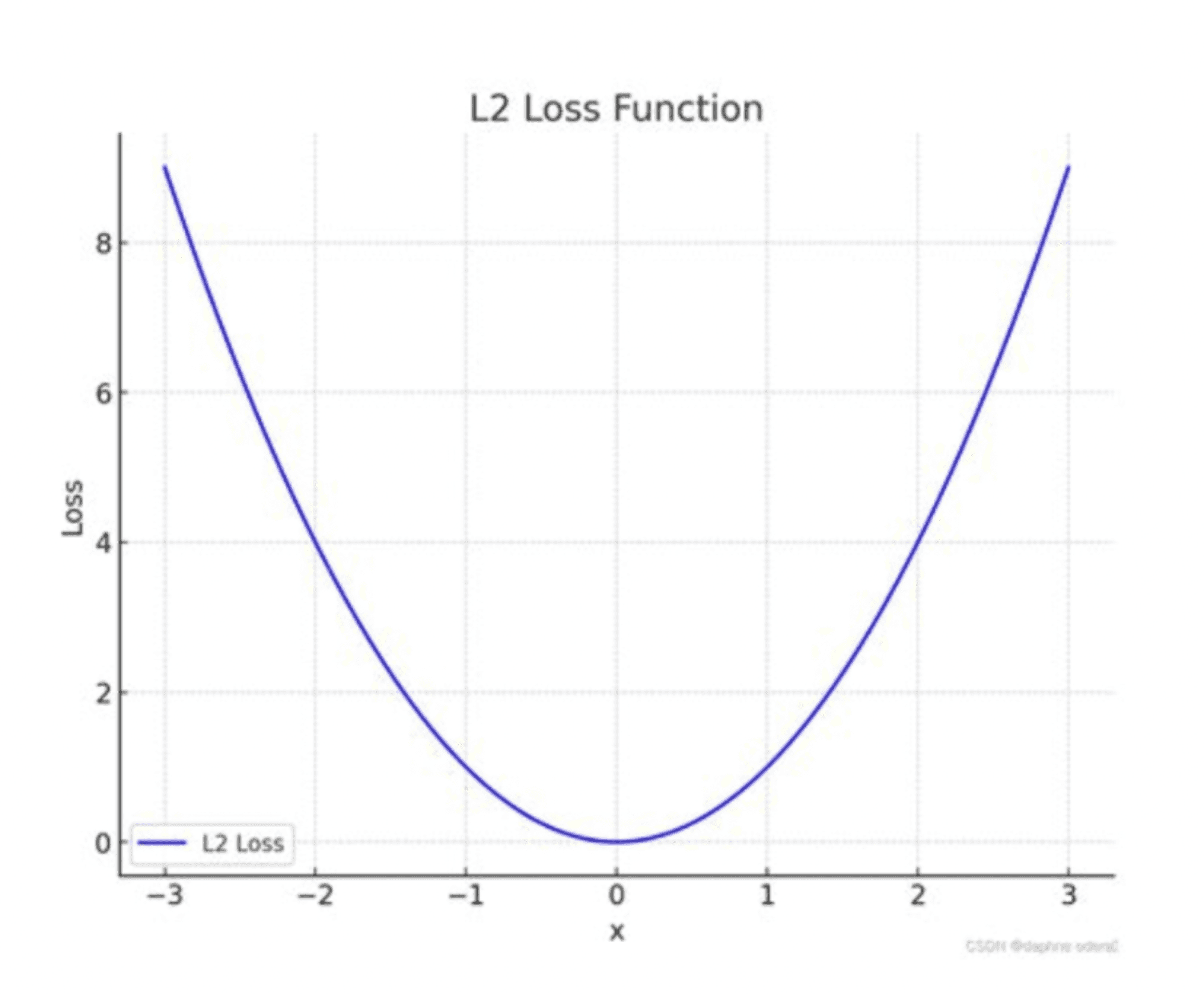

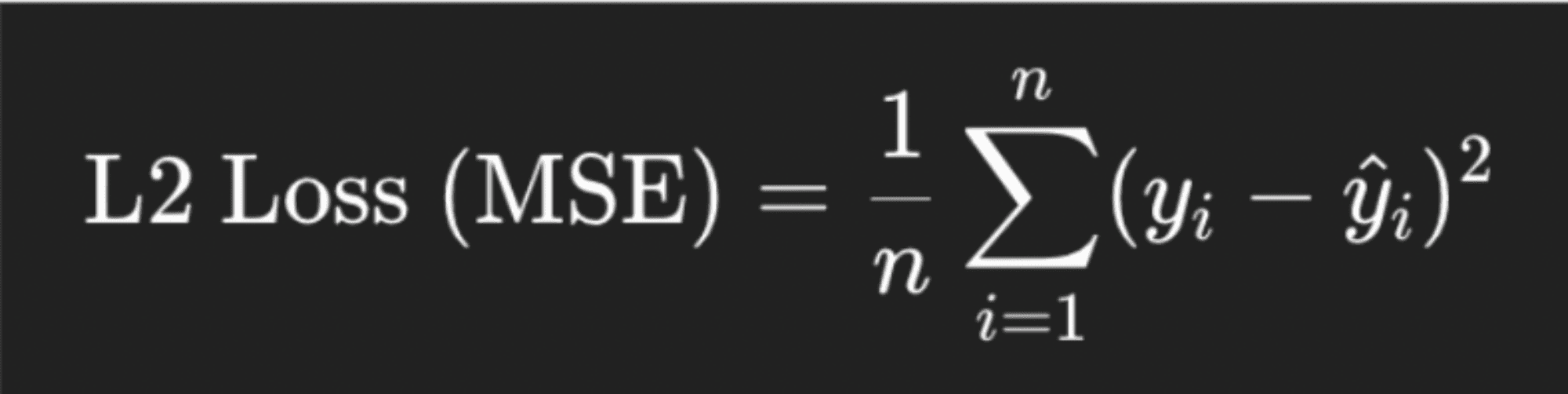

✔️ Loss Function

The loss function measures error between predictions and reality - like comparing simulation results to experimental data.

A common choice is Mean Squared Error (MSE): the squared differences between predicted and actual values averaged across the dataset.

Think of it as measuring squared deviation between predicted and actual stress at each mesh node and averaging the result.

L2 loss function - the error is calculated as the mean of the squared differences between the predicted (learned) and actual output values, also known as the ground truth (GT).
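Written out, the Mean Squared Error described above takes the following form. The symbols are assumptions chosen for this sketch: N is the number of samples, y_i is the ground-truth value for sample i, and ŷ_i is the corresponding prediction.

```latex
\mathcal{L}_{\mathrm{MSE}} = \frac{1}{N} \sum_{i=1}^{N} \left( \hat{y}_i - y_i \right)^2
```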

✔️ Backpropagation

The algorithm that lets models learn from mistakes. The error is propagated backward through the network to update weights and biases - like tracing a robotic arm’s error back through each joint to recalibrate it.
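Here is a minimal sketch of backpropagation on a tiny network (one input, two tanh hidden neurons, one linear output) with a squared-error loss. The architecture, parameters, and training example are all invented for illustration. The last lines compare one backpropagated gradient against a finite-difference estimate as a sanity check.

```python
import math

def forward(x, params):
    """1 input -> 2 tanh hidden neurons -> 1 linear output."""
    h = [math.tanh(params["w1"][j] * x + params["b1"][j]) for j in range(2)]
    y_hat = sum(params["w2"][j] * h[j] for j in range(2)) + params["b2"]
    return h, y_hat

def loss(x, y, params):
    _, y_hat = forward(x, params)
    return (y_hat - y) ** 2

def backprop(x, y, params):
    """Propagate the output error backward with the chain rule."""
    h, y_hat = forward(x, params)
    d_yhat = 2.0 * (y_hat - y)                    # dL/dy_hat
    grads = {
        "w2": [d_yhat * h[j] for j in range(2)],  # output-layer weights
        "b2": d_yhat,
        "w1": [],
        "b1": [],
    }
    for j in range(2):
        d_h = d_yhat * params["w2"][j]            # error reaching hidden neuron j
        d_pre = d_h * (1.0 - h[j] ** 2)           # through tanh: 1 - tanh(z)^2
        grads["w1"].append(d_pre * x)
        grads["b1"].append(d_pre)
    return grads

# Hypothetical parameters and a single made-up training example.
params = {"w1": [0.4, -0.6], "b1": [0.1, 0.2], "w2": [0.7, -0.3], "b2": 0.05}
x, y = 1.5, 0.8
grads = backprop(x, y, params)

# Sanity check: nudge one weight and see how much the loss actually changes.
eps = 1e-6
bumped = {k: (list(v) if isinstance(v, list) else v) for k, v in params.items()}
bumped["w1"][0] += eps
numeric = (loss(x, y, bumped) - loss(x, y, params)) / eps
print(grads["w1"][0], numeric)
```

The two printed numbers should agree closely, which is a common way to check a hand-written gradient.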

✔️ Optimizers

Optimizers adjust weights to minimize loss.

Gradient Descent is like walking downhill on an error surface.

Adam builds on this with momentum and adaptive step sizes for faster convergence.
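The difference between the two optimizers can be sketched on a toy error surface. The quadratic bowl, the starting point, and the step sizes below are assumptions picked for this example; the Adam update follows the standard published form with its usual default decay rates.

```python
import math

def grad(w):
    """Gradient of the toy error surface f(w) = (w0 - 3)^2 + 10 * (w1 + 1)^2."""
    return [2.0 * (w[0] - 3.0), 20.0 * (w[1] + 1.0)]

def gradient_descent(w, lr=0.05, steps=200):
    w = list(w)
    for _ in range(steps):
        g = grad(w)
        w = [wi - lr * gi for wi, gi in zip(w, g)]    # step straight downhill
    return w

def adam(w, lr=0.1, beta1=0.9, beta2=0.999, eps=1e-8, steps=200):
    w = list(w)
    m = [0.0] * len(w)   # running mean of gradients (momentum)
    v = [0.0] * len(w)   # running mean of squared gradients (per-weight scale)
    for t in range(1, steps + 1):
        g = grad(w)
        m = [beta1 * mi + (1 - beta1) * gi for mi, gi in zip(m, g)]
        v = [beta2 * vi + (1 - beta2) * gi * gi for vi, gi in zip(v, g)]
        m_hat = [mi / (1 - beta1 ** t) for mi in m]   # bias correction
        v_hat = [vi / (1 - beta2 ** t) for vi in v]
        w = [wi - lr * mh / (math.sqrt(vh) + eps)     # adaptive step per weight
             for wi, mh, vh in zip(w, m_hat, v_hat)]
    return w

start = [0.0, 0.0]
w_gd = gradient_descent(start)
w_adam = adam(start)
print("gradient descent:", w_gd)
print("Adam:            ", w_adam)
```

Both runs should end near the minimum at (3, -1). On a simple bowl like this, plain gradient descent is already efficient; Adam's adaptive steps tend to matter more on the noisy, badly scaled surfaces typical of neural network training.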

Next, Training and Evaluation: From Data to Decisions

Training Set: Historical data (sensor logs, simulations, past designs) used for learning.

Validation Set: Held-out data used to tune hyperparameters (learning rate, number of layers) and to check how well the model generalizes during development.

Test Set: Unseen data that confirms the model learned general principles rather than just memorizing the training examples.

Typical splits: 70% training / 15% validation / 15% test.

Epochs: Full passes over the training set.

Batches: Subsets for efficiency - like testing samples instead of full stock.

Overfitting: Memorizing noise - like memorizing every stress-strain curve but failing on a new alloy.

Underfitting: Too simple - like approximating a curved beam with a straight line.
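The split, epoch, and batch terms above can be sketched as follows. The helper names, the batch size of 16, and the stand-in dataset of 100 integers are assumptions for illustration.

```python
import random

def split_dataset(samples, train=0.70, val=0.15, seed=0):
    """Shuffle once, then cut into train / validation / test partitions."""
    shuffled = list(samples)
    random.Random(seed).shuffle(shuffled)
    n_train = round(len(shuffled) * train)
    n_val = round(len(shuffled) * val)
    return (shuffled[:n_train],
            shuffled[n_train:n_train + n_val],
            shuffled[n_train + n_val:])           # remainder is the test set

def batches(data, batch_size):
    """Yield consecutive mini-batches; the last one may be smaller."""
    for start in range(0, len(data), batch_size):
        yield data[start:start + batch_size]

samples = list(range(100))                        # stand-in for 100 labeled records
train_set, val_set, test_set = split_dataset(samples)
print(len(train_set), len(val_set), len(test_set))  # prints "70 15 15"

for epoch in range(2):                            # one epoch = one full pass over train_set
    n_batches = sum(1 for _ in batches(train_set, batch_size=16))
    print(f"epoch {epoch}: {n_batches} batches")
```

Shuffling before splitting matters when records are stored in a meaningful order, for example by date or by machine, so that each partition sees a representative mix.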

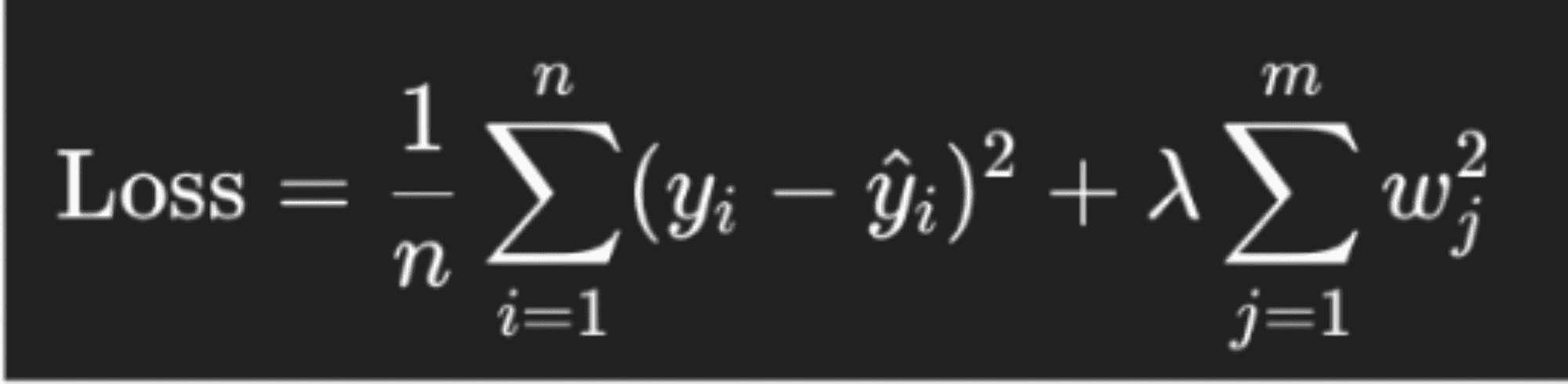

Regularization: Techniques that help prevent overfitting, such as limiting model complexity, adding noise, or stopping training early. In mechanical terms, it’s similar to adding damping to prevent a structure from vibrating excessively at resonance.

Loss Function with Regularization:

First term: Mean Squared Error (MSE)

Second term: L2 penalty on large weights

λ (lambda): Regularization strength
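One common way to write a loss with the two terms listed above is shown below. The notation is an assumption for this sketch: N samples, ground truth y_i, prediction ŷ_i, and model weights w_j.

```latex
\mathcal{L}(w) = \underbrace{\frac{1}{N} \sum_{i=1}^{N} \left( \hat{y}_i - y_i \right)^2}_{\text{MSE}}
\; + \; \underbrace{\lambda \sum_{j} w_j^{2}}_{\text{L2 penalty}}
```

Setting λ = 0 recovers the plain MSE; larger values of λ push the weights toward zero.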

By discouraging large weights, the model learns smoother, more generalizable patterns - rather than simply memorizing noise.
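The shrinking effect of the penalty is easiest to see on a one-weight model. The measurements below are made up, and the closed-form minimizer applies only to this toy case of fitting y ≈ w · x with an MSE term plus λ · w².

```python
def regularized_loss(w, xs, ys, lam):
    """MSE data term plus an L2 penalty on the weight."""
    mse = sum((w * x - y) ** 2 for x, y in zip(xs, ys)) / len(xs)
    return mse + lam * w ** 2

def best_weight(xs, ys, lam):
    """Closed-form minimizer of regularized_loss for the one-weight model y ~ w * x."""
    n = len(xs)
    return sum(x * y for x, y in zip(xs, ys)) / (sum(x * x for x in xs) + lam * n)

# Made-up, slightly noisy measurements.
xs = [1.0, 2.0, 3.0, 4.0]
ys = [2.1, 3.9, 6.2, 7.8]

for lam in (0.0, 0.1, 1.0):
    w = best_weight(xs, ys, lam)
    print(f"lambda={lam}: w={w:.3f}, loss={regularized_loss(w, xs, ys, lam):.3f}")
```

As λ grows, the best weight moves toward zero: the model trades a slightly worse fit on the training data for a smaller, more conservative weight.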

Generative AI: Expanding What’s Possible

✔️ Generative Design - AI as a Creative Partner

You define performance goals, constraints, and materials - and AI explores thousands of design possibilities. The result? Lightweight, non-intuitive geometries (like lattice structures) that meet mechanical requirements and manufacturing constraints while optimizing for weight, strength, and cost.
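The "define goals and constraints, then explore candidates" loop can be sketched with a deliberately simple stand-in: random search over the cross-section of a rectangular cantilever. This is not how commercial generative design tools work (they typically use topology optimization or similar methods); the load, length, stress limit, density, and size limits are all assumed values for illustration.

```python
import random

FORCE_N = 500.0          # tip load on the cantilever (assumed)
LENGTH_M = 0.3           # beam length (assumed)
ALLOWABLE_PA = 150e6     # allowable bending stress (assumed)
DENSITY = 2700.0         # kg/m^3, roughly aluminum

def max_bending_stress(b, h):
    """Rectangular cantilever with tip load: sigma = 6 * F * L / (b * h^2)."""
    return 6.0 * FORCE_N * LENGTH_M / (b * h ** 2)

def mass(b, h):
    return DENSITY * b * h * LENGTH_M

rng = random.Random(0)
best = None
for _ in range(5000):
    # Propose a candidate within manufacturing limits (5 mm to 50 mm per side).
    b = rng.uniform(0.005, 0.05)
    h = rng.uniform(0.005, 0.05)
    if max_bending_stress(b, h) > ALLOWABLE_PA:
        continue                                   # violates the strength constraint
    if best is None or mass(b, h) < mass(*best):
        best = (b, h)                              # lightest feasible design so far

b, h = best
print(f"b={b * 1000:.1f} mm, h={h * 1000:.1f} mm, mass={mass(b, h) * 1000:.0f} g, "
      f"stress={max_bending_stress(b, h) / 1e6:.0f} MPa")
```

Even this crude search tends to find the familiar engineering answer: a narrow, tall section carries bending load with less material than a square one.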

✔️ Digital Twins - Bridging Real and Virtual Worlds

A digital twin is a continuously updated, data-driven virtual model of a physical system - a turbine, robot, or vehicle. It helps engineers simulate wear and predict failures long before they happen, in fields ranging from energy to biomedical devices, while refining mechanical systems toward better-optimized designs.
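At its smallest, the "continuously updated virtual model" idea might look like the sketch below: an object that absorbs new measurements and extrapolates toward a limit. The class name, the smoothing factor, the wear limit, and the readings are all hypothetical, and real digital twins combine physics models with far richer sensor data.

```python
class BearingTwin:
    """Toy digital twin: tracks a wear estimate and extrapolates time to a limit."""

    def __init__(self, wear_limit_mm, smoothing=0.3):
        self.wear_limit_mm = wear_limit_mm
        self.smoothing = smoothing
        self.wear_mm = 0.0          # latest measured wear
        self.rate_mm_per_h = 0.0    # smoothed wear rate
        self.hours = 0.0

    def update(self, hours, measured_wear_mm):
        """Blend a new measurement into the virtual state."""
        dt = hours - self.hours
        if dt <= 0:
            raise ValueError("measurements must move forward in time")
        new_rate = (measured_wear_mm - self.wear_mm) / dt
        a = self.smoothing
        self.rate_mm_per_h = a * new_rate + (1 - a) * self.rate_mm_per_h
        self.wear_mm = measured_wear_mm
        self.hours = hours

    def hours_to_limit(self):
        """Linear extrapolation; returns None while no wear trend is visible."""
        if self.rate_mm_per_h <= 0:
            return None
        return max(self.wear_limit_mm - self.wear_mm, 0.0) / self.rate_mm_per_h

# Made-up inspection readings: (operating hours, measured wear in mm).
twin = BearingTwin(wear_limit_mm=0.5)
for hours, wear in [(100, 0.02), (200, 0.05), (300, 0.09)]:
    twin.update(hours, wear)

remaining = twin.hours_to_limit()
print(f"estimated hours until wear limit: {remaining:.0f}")
```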
